Dynamic Reconfiguration in Spire

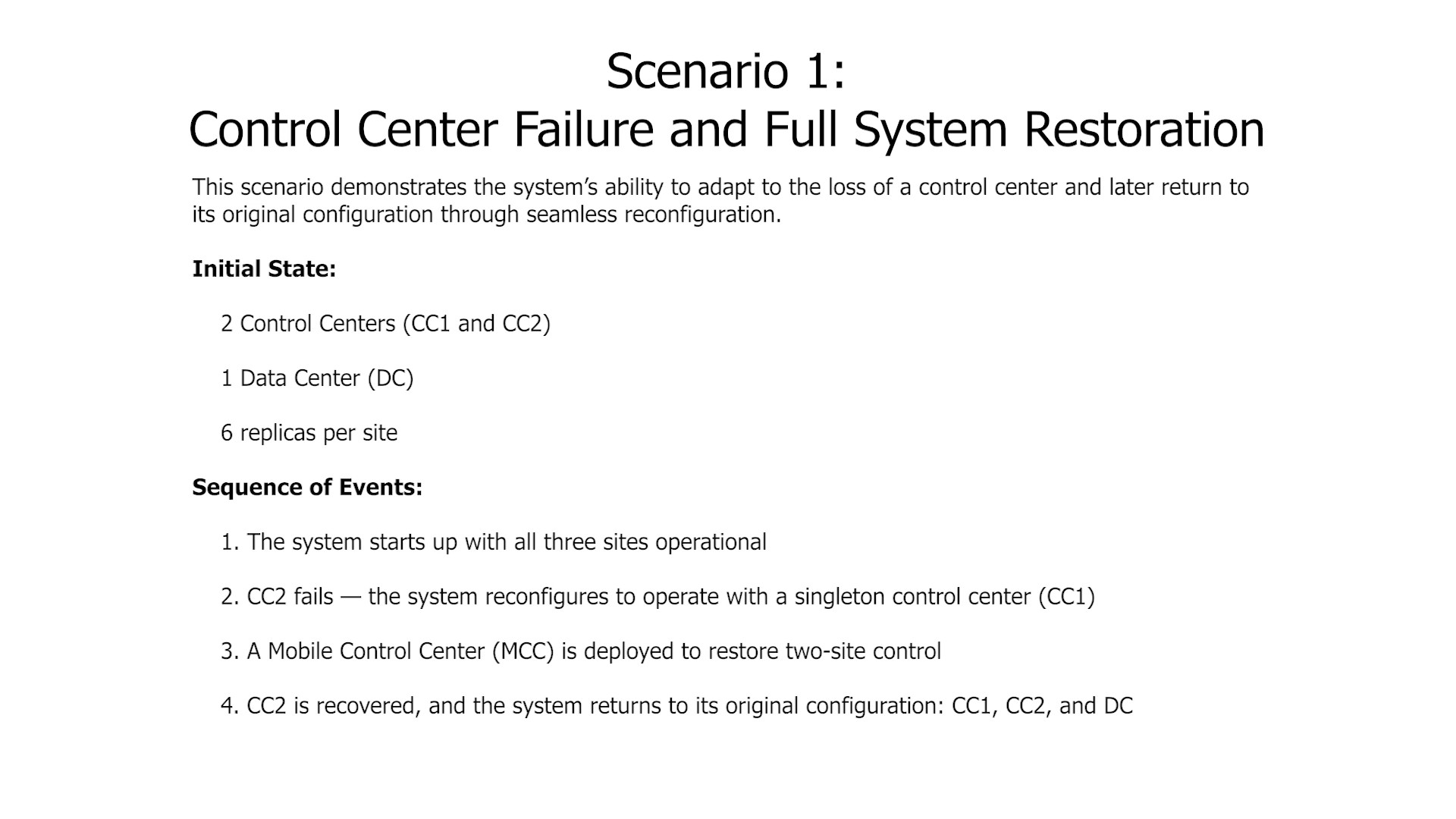

I collaborated with Professor Babay and a small team of graduate students to design a dynamic reconfiguration system for Spire, a fault-tolerant SCADA protection platform, and I was solely responsible for its implementation. The driving use case is crisis response: when a control center goes offline because of a natural disaster or cyberattack, a mobile replacement must be brought online without taking the protected infrastructure down.

The Problem

Spire's original codebase could not support this. Site counts, replica numbers, and network parameters were compile-time constants, so any topology change required a full recompile and redeployment, and there was no way to add nodes without taking the entire system offline.

What I Built

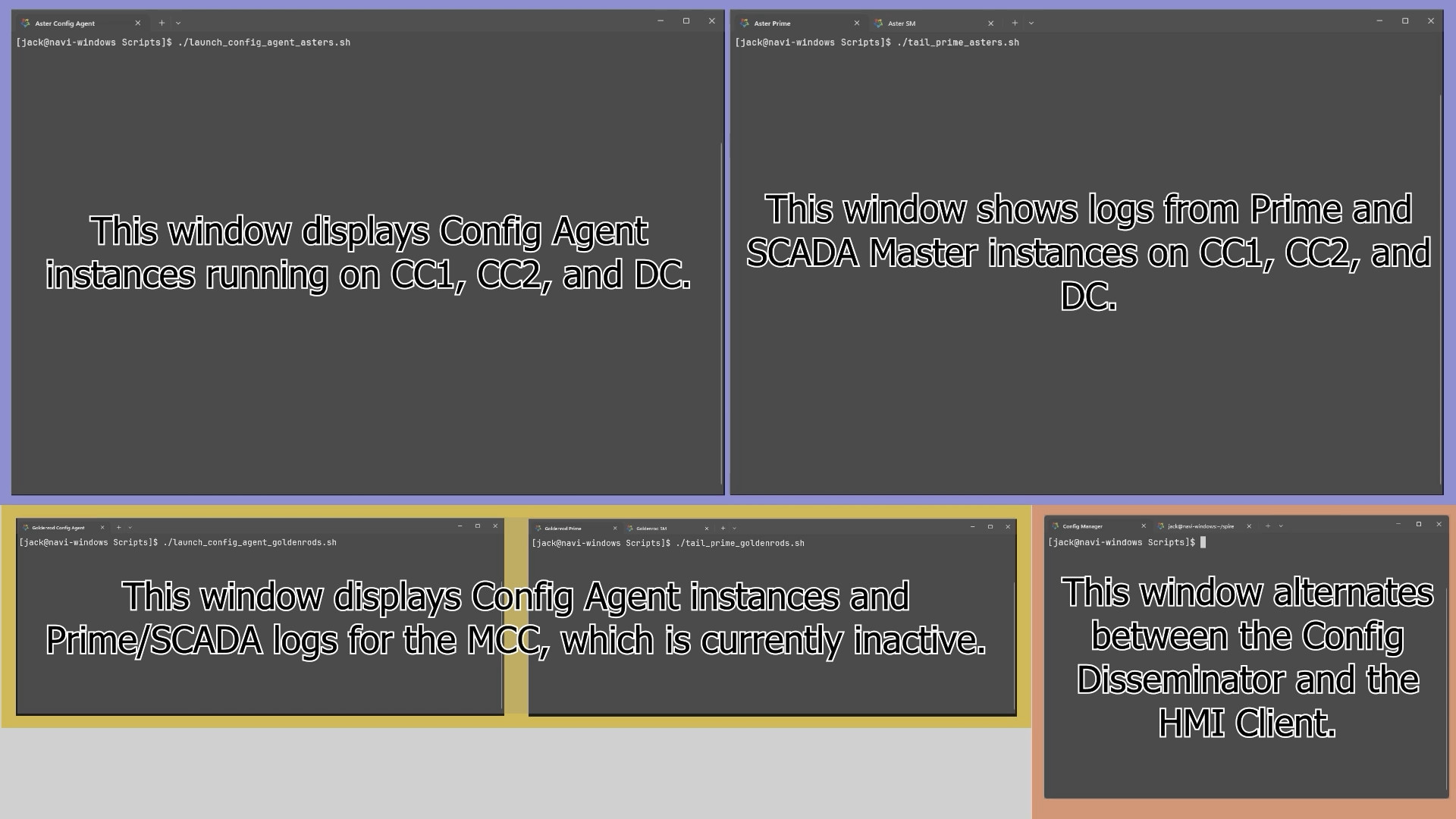

End-to-end reconfiguration pipeline. The system spans three components: a Config Manager that validates topology, roles, and key material, then generates and signs each new configuration before release; a Config Disseminator that reliably distributes the resulting packages to every site; and a Config Agent on every node that verifies each package's authenticity, stages the changes, and coordinates local service transitions without disrupting active operations.
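The sign-validate-stage flow can be sketched as follows. This is a minimal illustration, not Spire's implementation: the function and class names are hypothetical, an HMAC with a shared demo key stands in for the public-key signatures a real Config Manager would use, and the replica-count check assumes the n ≥ 3f + 2k + 1 requirement (f compromised replicas, k unavailable ones) associated with Spire's Prime replication engine.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical stand-in: a shared HMAC key replaces real public-key signatures.
SIGNING_KEY = b"demo-only-key"


def validate_topology(config: dict) -> None:
    """Hypothetical Config Manager checks run before a config is released."""
    f, k = config["f"], config["k"]
    n = len(config["replicas"])
    # Assumed requirement: tolerate f intrusions and k unavailable replicas.
    required = 3 * f + 2 * k + 1
    if n < required:
        raise ValueError(f"need at least {required} replicas, got {n}")
    for replica in config["replicas"]:
        if not replica.get("pubkey"):
            raise ValueError(f"replica {replica['id']} has no key material")


def sign_config(config: dict) -> Tuple[bytes, str]:
    """Validate, serialize deterministically, and tag the package."""
    validate_topology(config)
    package = json.dumps(config, sort_keys=True).encode()
    tag = hmac.new(SIGNING_KEY, package, hashlib.sha256).hexdigest()
    return package, tag


@dataclass
class ConfigAgent:
    """Hypothetical per-node agent: verify first, then stage, then activate."""
    node_id: str
    active_version: int = 0
    staged: Optional[dict] = None

    def receive(self, package: bytes, tag: str) -> bool:
        expected = hmac.new(SIGNING_KEY, package, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, tag):
            return False  # reject packages that fail authentication
        config = json.loads(package)
        if config["version"] <= self.active_version:
            return False  # ignore stale or replayed configurations
        self.staged = config  # stage only; services keep running on the old config
        return True

    def activate(self) -> None:
        # In a real system this switch would be coordinated across nodes.
        if self.staged is not None:
            self.active_version = self.staged["version"]
            self.staged = None
```

Separating `receive` from `activate` mirrors the staging idea in the text: a node can accept and verify a new configuration while still serving under the old one, and switch only when the transition is coordinated.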

Custom reliable transmission protocol. Standard reliable-transport assumptions do not hold across Spire's unreliable multi-site links, so I built fragmentation and fragment reassembly over Spines multicast, with periodic re-broadcast to ensure that every node eventually receives the complete package.
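The fragment, reassemble, and re-broadcast pattern can be sketched as below. This is an illustrative sketch only: the header layout, `MAX_PAYLOAD` value, and function names are assumptions rather than Spire's wire format, and a seeded random drop simulates a lossy multicast channel in place of Spines. Because every fragment carries its index and total count, the receiver tolerates loss, reordering, and the duplicates that re-broadcast inevitably produces.

```python
import random
import struct
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical fragment header: message id, fragment index, fragment count.
HEADER = struct.Struct("!IHH")
MAX_PAYLOAD = 1024  # assumed per-fragment payload size, not a Spines limit


def fragment(msg_id: int, data: bytes) -> List[bytes]:
    """Split a package into self-describing fragments."""
    chunks = [data[i:i + MAX_PAYLOAD] for i in range(0, len(data), MAX_PAYLOAD)] or [b""]
    return [HEADER.pack(msg_id, idx, len(chunks)) + c for idx, c in enumerate(chunks)]


class Reassembler:
    """Collects fragments in any order; duplicates are harmless."""

    def __init__(self) -> None:
        self.partial: Dict[int, Dict[int, bytes]] = {}
        self.done: Set[int] = set()

    def accept(self, frame: bytes) -> Optional[bytes]:
        msg_id, idx, total = HEADER.unpack_from(frame)
        if msg_id in self.done:
            return None  # late duplicate from a re-broadcast round
        parts = self.partial.setdefault(msg_id, {})
        parts[idx] = frame[HEADER.size:]
        if len(parts) < total:
            return None  # still waiting on missing fragments
        self.done.add(msg_id)
        del self.partial[msg_id]
        return b"".join(parts[i] for i in range(total))


def rebroadcast_until_delivered(frames: List[bytes], receiver: Reassembler,
                                loss: float = 0.3, max_rounds: int = 50,
                                seed: int = 0) -> Tuple[Optional[bytes], int]:
    """Simulate periodic re-broadcast of all fragments over a lossy channel."""
    rng = random.Random(seed)
    for rounds in range(1, max_rounds + 1):
        for frame in frames:
            if rng.random() >= loss:  # this copy survived the lossy link
                result = receiver.accept(frame)
                if result is not None:
                    return result, rounds
    return None, max_rounds
```

How the real sender decides when to stop re-broadcasting is not covered here; the simulation simply stops once the receiver has a complete package.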

26-node reproducible demo environment. To validate the system under realistic conditions, I built a custom Docker image that compiles the full Spire stack from source, automated every dependency-installation and key-generation step, and designed a Docker Compose topology of 26 containers on a bridged subnet. The result is a one-command environment for demonstrating live topology changes and coordinated transitions with zero downtime.
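A topology like this can be generated rather than written by hand. The sketch below is hypothetical: the image name, the `172.28.0.0/24` subnet, the `spire_net` network name, and the `node01`..`node26` naming are all assumptions, and it does not reflect how the 26 containers are actually divided into sites or roles. It emits a Compose definition with a user-defined bridge network and a static IPv4 address per container; since JSON is a subset of YAML, the printed output should be loadable as a Compose file.

```python
import ipaddress
import json

# Hypothetical values: image name, subnet, and node naming are assumptions.
IMAGE = "spire-demo:latest"
SUBNET = ipaddress.ip_network("172.28.0.0/24")
NUM_NODES = 26


def build_compose(num_nodes: int = NUM_NODES) -> dict:
    """Build a Compose definition with one static-IP container per node."""
    hosts = list(SUBNET.hosts())  # 172.28.0.1 .. 172.28.0.254
    services = {}
    for i in range(num_nodes):
        name = f"node{i + 1:02d}"
        services[name] = {
            "image": IMAGE,
            "hostname": name,
            # Skip .1, which Docker typically assigns to the bridge gateway.
            "networks": {"spire_net": {"ipv4_address": str(hosts[i + 1])}},
        }
    return {
        "services": services,
        "networks": {
            "spire_net": {
                "driver": "bridge",
                "ipam": {"config": [{"subnet": str(SUBNET)}]},
            }
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_compose(), indent=2))
```

Fixed addresses make the generated topology deterministic, which matters when node addresses are baked into signed configurations and key material.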